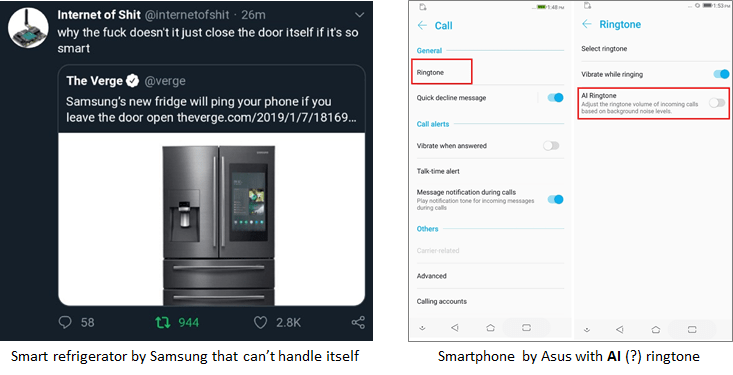

These days we are so gung-ho about new technologies (mainly AI) that we have almost stopped demanding good-quality solutions. We are so fond of this newness that we ignore the flaws new technologies carry with them.

This, in turn, encourages subpar solutions day by day, and we are sitting on a pile of junk that is growing faster than ever. The disturbing part is that those who make subpar solutions still make us believe that machines will be wiser soon. When and how, I wonder! More importantly, why should we tolerate poorly designed solutions until then?

Having a cool technology at our disposal was never meant to be a panacea, and we should stop getting carried away with it.

If AI is such a great thing (and I know it is, but not in its current form), why should we limit its applications to solving problems? I would rather pre-empt problems and avoid them altogether; wouldn't that be the wise thing to do? How many technologies today stop problems from becoming problems?

I think we should avoid rushing towards trends and hype and following them blindly. Rather, we should be thoughtful and keep our sanity in deciding where, when, and why we use emerging technologies, AI included.

When I mention junk, I am referring to subpar and trivial solutions that add little to no value. I am sure the community would tend to disavow them, but be aware that they have already made their way to the crowd anyway.

Why do we need to challenge this kind of stuff?

Well, first, I am not against emerging technologies at all; rather I am a strong proponent. However, what I do not quite appreciate is quantity over quality. I think it often promotes false economics, and here is why…

- Simply pushing for more quantity (of tech solutions) without paying attention to quality (of the solutions) puts a lot of garbage out in the field.

- This garbage, once out in the market, creates disillusionment and in turn adds to the resistance to healthy adoption.

- This approach (quantity over quality) also encourages bad practices by creators of AI at customers' expense, where subpar solutions are pushed down customers' throats by exploiting their ignorance and their urge to give in to FOMO (fear of missing out).

Stuff like this happens during the hype cycle of any new or upcoming trend or technology. That it is happening with AI is nothing new per se.

The problem, though, is that flaws in subpar technologies do not surface until it is too late! In many cases, the damage is already done and is irreversible. Unlike many other technologies, where things simply work or do not work, AI has a significant grey area whose shade can change over time. Moreover, if we are not clear about which direction that change will take, we end up creating junk.

We need not only to challenge the quality of every solution but also to improve our approach towards emerging technologies in general.

How can we encourage a better approach?

There is no golden rule or checklist as such for a better approach. Nonetheless, a few things I can suggest are…

Seeking transparency in solutions

You will notice that people often replicate their inner selves in what they make. Casual thinking encourages casual output, just as meticulous thinking results in better output. Programmers who have clarity of mind and tidy habits often create clean and flawless programs.

We must make people responsible and accountable for their output. In the case of an AI, if it does a bad job, ask its creators to explain and demand transparency. Hiding behind deep learning algorithms and saying, “AI did it!” will not cut it.

People will often tell you that knowing the inner mechanics is not important for users and that only the output is. We must challenge this argument. For many other technologies, such as a car or a microwave oven, it might hold true, but for AI it does not!

For each action that automation or AI takes, its creators should be able to explain why their technology did that!
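One way to picture this kind of explainability is a decision function that returns its reasons along with its verdict. The sketch below is purely illustrative: the loan-screening setting, the feature names, the weights, and the threshold are all assumptions made up for this example, and a real system would need a far richer explanation method.

```python
from dataclasses import dataclass

# Hypothetical weights for an illustrative loan-screening score.
WEIGHTS = {"income": 0.5, "debt": -0.8, "years_employed": 0.3}
THRESHOLD = 1.0  # hypothetical approval cut-off


@dataclass
class Decision:
    approved: bool
    score: float
    reasons: list  # (feature, contribution) pairs, largest impact first


def decide(applicant: dict) -> Decision:
    """Score an applicant and keep the per-feature contributions."""
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    score = sum(contributions.values())
    # Sort by absolute impact so the most influential factor is listed first.
    reasons = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return Decision(approved=score >= THRESHOLD, score=score, reasons=reasons)


decision = decide({"income": 4.0, "debt": 3.0, "years_employed": 2.0})
print("approved:", decision.approved)
for feature, contribution in decision.reasons:
    print(f"{feature}: {contribution:+.2f}")
```

Here the applicant is rejected, and the list of reasons shows that debt was the largest factor. The point is not the scoring rule itself but that every action comes with an answer to "why?".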

Seeking quality & accountability of outcomes

People will most likely rely on technology and automation for their business-critical or life-critical functions. Bringing in people who take accountability for outcomes seriously is often the better decision. Unless the application is for novelty or sheer playfulness, we need to put a responsible adult in charge.

Machine learning is essentially teaching a computer to label things with examples rather than with programmed instructions. An AI based on this kind of learning does wrong things mainly in two circumstances: first, if the fundamental decision-making logic programmed into it (by humans) is flawed, i.e. bad programming; second, if the dataset it was trained on is incorrectly labelled (by humans), i.e. bad data quality. In either case, the machine will do the wrong thing or do it wrong!
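The effect of bad labels can be shown with a toy example. The sketch below uses a deliberately simple one-nearest-neighbour classifier; the sensor readings and the "healthy"/"faulty" labels are hypothetical, chosen only to show how a single human labelling mistake changes what the machine says.

```python
def nearest_label(training, x):
    """Return the label of the training example closest to x (1-nearest neighbour)."""
    closest = min(training, key=lambda example: abs(example[0] - x))
    return closest[1]


# Hypothetical sensor readings: low values are healthy, high values are faulty.
clean = [(1.0, "healthy"), (2.0, "healthy"), (3.0, "healthy"),
         (8.0, "faulty"), (9.0, "faulty")]

# Same readings, but a human labelled one healthy example incorrectly.
mislabelled = [(1.0, "healthy"), (2.0, "faulty"), (3.0, "healthy"),
               (8.0, "faulty"), (9.0, "faulty")]

reading = 2.1
clean_prediction = nearest_label(clean, reading)
bad_prediction = nearest_label(mislabelled, reading)
print(clean_prediction, bad_prediction)
```

The algorithm is identical in both runs; only the human-supplied labels differ, and so does the answer.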

Here creators cannot just wash their hands of it and say they do not know what went wrong when something goes awry. Humans need to take responsibility for embedding those wrong fundamentals and accept accountability for the outcomes.

Even today, the world looks to parents when their child does something wrong, questioning their parenting methods and practices. Why, then, can we not look at the creators of AI solutions in the same way?

We should start assigning liability to creators of AI, much as we do now with guns, and make quality acceptance parameters stricter.

Being careful with MVP people

No doubt a minimum viable product (MVP) is key to developing a successful product. However, treating an MVP as an MVP is very important.

The problem starts when someone sells you work that is still in progress or has not yet reached an acceptable working level! A solution that does not work would be less of a problem than one that works against your goals.

The issue with many AI or automation solutions is that sometimes they do not break visibly. They create a leakage of some sort, which is not quite bad but not quite right either. This sort of grey output piles up over time before one realizes that one owns a heap of junk. Getting rid of that junk or fixing the completely flawed system could become a nightmare.
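Grey output of this kind is easier to catch if someone keeps counting it. The following is a minimal sketch, assuming you can spot-check a sample of outputs against expected values. The class name, window size, tolerance, and sample figures are all hypothetical.

```python
from collections import deque


class GreyOutputMonitor:
    """Track the share of 'slightly off' outputs over a sliding window."""

    def __init__(self, window: int = 50, max_off_rate: float = 0.1):
        self.results = deque(maxlen=window)  # keeps only the most recent checks
        self.max_off_rate = max_off_rate

    def record(self, expected: float, actual: float, tolerance: float) -> None:
        # An output counts as "off" when it misses the expected value
        # by more than the tolerance.
        self.results.append(abs(expected - actual) > tolerance)

    def off_rate(self) -> float:
        return sum(self.results) / len(self.results) if self.results else 0.0

    def needs_review(self) -> bool:
        return self.off_rate() > self.max_off_rate


monitor = GreyOutputMonitor(window=10, max_off_rate=0.2)
# Hypothetical spot checks: (expected, actual) pairs from manual sampling.
checks = [(100, 100), (100, 101), (100, 100), (100, 104), (100, 105),
          (100, 100), (100, 106), (100, 100), (100, 103.5), (100, 100)]
for expected, actual in checks:
    monitor.record(expected, actual, tolerance=3)
print(monitor.off_rate(), monitor.needs_review())
```

No single check here looks like a failure, yet four in ten outputs miss the tolerance, which is enough to flag the system for review before the pile grows.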

MVP is good and important, but we should deal with solutions that are not ready for full live deployment with utmost care and skepticism.

Auditing your technology thoroughly

Contemporary solutions are more than simply packaged software these days. They are complex pieces of intertwined technologies. Evidently, older ways of ensuring that they are working as expected are not suitable anymore. Quality assurance methods such as unit testing, regression testing, etc. are not enough to qualify emerging technological solutions.

Rather, we need to take a systematic approach. Test the technology against real-life scenarios, both likely and seemingly impossible, with the same rigour.

Thoroughly audit the system for what it has learnt, how and from whom, and then verify how that can affect you in the future. Do not just take creators’ word for it.

Most importantly, pull the plug if needed: it is far costlier to keep going with bad-quality automation and AI than to just shut it down.

Preferring pre-emption over a fix

Even at this nascent stage, the market offers several solutions to any one problem. Not all of them will be a good match, and their offerings sometimes differ slightly.

Some solutions provide a fix to the problem after the fact (e.g. automated fixing of a broken machine), while others provide an early indication (e.g. anomaly detection in machine performance). Some will help in improving problem-fixing performance, while others will help in identifying problems early on.

Prefer pre-emptive systems over fixers. Both are important, but if you must choose, prevention is always better than cure!
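The early-indication idea mentioned above (anomaly detection in machine performance) can be sketched in a few lines. This is only one simple approach, a z-score check against a baseline of normal readings. The vibration figures and the threshold of three standard deviations are assumptions for illustration.

```python
from statistics import mean, stdev


def find_anomalies(baseline, new_readings, z_threshold=3.0):
    """Flag readings more than z_threshold standard deviations from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any deviation at all is unusual.
        return [r for r in new_readings if r != mu]
    return [r for r in new_readings if abs(r - mu) / sigma > z_threshold]


# Hypothetical vibration readings from a machine running normally.
baseline = [0.50, 0.52, 0.49, 0.51, 0.50, 0.48, 0.53, 0.50]
latest = [0.51, 0.49, 0.75, 0.52]
anomalies = find_anomalies(baseline, latest)
print(anomalies)
```

The reading of 0.75 is flagged while the machine is still running, which gives someone the chance to act before there is anything to fix.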

Avoiding ignorance from the top

People often leverage your ignorance to sell you something.

Getting educated on emerging technologies is quite important to avoid this kind of mis-selling. Starting from the top is even more important. Executives should better understand what they are getting into.

They need to invest some time in acquiring this education to be able to ask the right questions at the right time and at the right steps. Being more involved is necessary; simply learning some tech lingo is not going to be enough. If you are not involved, nothing else can fix it, ever!

Adding a feedback loop and sincerely learning from it

Unfortunately, there is a general absence of experience-based knowledge in the developer community, so we cannot rely solely on data and algorithms to identify the right problems to solve. This is a serious issue these days, and a neutral business perspective is often required to see things through an objective lens.

My mention of a feedback loop here does not relate to machine learning or AI systems as such; rather, it refers to the value chain of development and use. Feedback serves its purpose when users provide it to creators and creators learn from it to adjust their creation.

If creators collect feedback but never consider or act on it, the system remains open loop, and an open-loop system cannot correct its own errors.

Creators and users should ensure that an effective feedback loop is established and everyone in that loop is sincerely learning from it.

As more and more technology is democratized, the ability to create junk (knowingly or unknowingly) will increase manifold. While some will use wisdom to create sensible solutions that are useful and of great quality, many others will do just the opposite.

We must brace ourselves: first to stop this junk from coming in, and second to tackle it if it comes through!